Editor's Choice

Featured in Tech

Telecom & Internet

Home & Design

Real Estate Agency & Brokerage Practices in India – Trends / Tips

A reputation for integrity and ethical business conduct is important in the agency business anywhere in the world. Finally, if you work hard, play hard and think fast, success is somewhere near you. Successful people are tenacious, dependable, inspiring, helpful and highly driven. Start a real estate PR blog and start spreading good things.

Banking / Finance Builders Corruption Infrastructure Investment Law / Legal Lifestyle Listing People Politics Real Estate

Check resale value when buying a home

Another factor to consider is the current pace of development around the area. Additions such as shopping malls, grocery stores or any other commercial development might put the locality in demand going forward.

Banking / Finance Builders Investment Listing People Real Estate

Truth vs Hype: Real Estate, False Hopes

Feb 12, 2016: The media investigates the darker side of India’s real estate boom: thousands of prospective homeowners left stranded by builders with half-complete or poorly built apartments. It’s a malaise not specific to the National Capital Region, but the NCR is the […]

Banking / Finance Builders Corruption Infrastructure Law / Legal Politics Real Estate

Sports & Lifestyle

Farmers fighting cronies for groundwater

Democracies are slowly being stolen by branded political parties, resulting in natural protests and revolutions. Don’t settle for the capital market sops offered by oppressive smart leaders and their bhakt gangs acting as TukTuk cheerleaders.

Agriculture Climate Corruption Food / Beverages Infrastructure Lifestyle Manufacturing Politics

Editor's Release

Refocus Anti-Social Media, AI, War Games, Tech Hype & Smart Corruption for quick bucks!

Until then, the whole show will always be planned and managed by a callous bureaucracy, because the leadership of the day neither has the vision nor cares about the future. Its only focus is to retain power and grow the wealth of its near and dear ones with the help of mushrooming media gurus, bhakt republics, desi fakers, tantalizers and vicious cobras.

Corruption Economy Entertainment Lifestyle People Politics World विश्वगुरु

Movies & Music

ICC Cricket World Cup

Cricket may have its politics and complexities, but no one can argue with heavy run-scoring or wicket-taking.

Entertainment Lifestyle Sports World विश्वगुरु

Govt-run Sports Parks for kids / athletes?

Feb 14, 2016: Public water sports cum amusement parks on the much-talked-about banks of the river Yamuna can help kids and youngsters from the middle and lower strata, or even from Govt schools, strive for the Olympics while saving degrading water bodies! Apart from fat cricket […]

Entertainment Lifestyle People Politics Sports

From mutated consumer behavior during the pandemic to post-pandemic AI consumerism!

Emotional people’s political and social beliefs are rooted in unprocessed trauma. They don’t mind supporting any gang of crooks, opportunists, racists and aspiring fascists out of a deep-seated need to see their perceived enemies suffer. Right-wing reactionaries delight only when their enemies have been gleefully trolled by some #messiah, namely MuskX, ModiG, MurdochR, MadC, MetaF, MediaP, MaharajaI, MukeshA or anything sounding royal.

Corruption Entertainment Lifestyle Media / PR People Politics Technology World विश्वगुरु

Best Fitness Training Courses and Personal Trainer Certification

This allows users to search for an activity they would like to take part in and helps them find an available venue, regardless of the time or day. Related searches: fitness trainer course fees, best personal trainer certification, gym trainer courses, online personal trainer certification, gym trainer course near me, FSSA personal trainer course fees, free online fitness courses with certificate, gym trainer course fees in India.

Education Entertainment Health Lifestyle People Startup

Thanks to Covid, DRM has spread fast into mobile apps, art, music, software

We are the system, we are the law,

We are corruption, worm in the core,

One of another, laugh ’til you cry,

Faith unto death or a knife in the eye!

International education platform M Square Media (MSM) welcomes push for higher education overhaul

Canada-based education company M Square Media (MSM) welcomes the latest major education push, which allows top foreign universities to set up campus shops and award degrees in a bid to enhance the country’s higher education.

Education Entertainment Lifestyle Media / PR People Politics Startup World विश्वगुरु

Latest in Blogs

Best Practices for Data Center Physical and Digital Security

The biggest security threat comes from inside. It is not only done deliberately; it also happens through the negligence or profiteering greed of your neighbors, friends, colleagues and even family. The better the training on data center security, the safer the overall digital environment will be for common users.

Infrastructure Law / Legal Linux Software Technology Telecom

Where are the REAL black cats of loot? Election time or not, who cares!

Ever more corrupt financial capitalism is fostering a culture of scams and collection extortion, run by suit-boot executives in collusion with cyber cells, call centers and the mobile app mafia. Scams have centered on malpractices in governance. Owners and promoters of big companies, political parties and institutions have defrauded citizens to enrich and develop themselves, smartly abusing legal, accounting, security, taxation, enforcement, investigative and documentation systems.

Corruption Economy Law / Legal Lifestyle People Politics

Strike back at financial terror and its glorified human trafficking

The greatest threat to humanity is that half of the psychopaths are busy “financing terror” while the rest are smart sociopaths profiting from “terror in finance”. For the 99% of commoners used as guinea pigs, the only revolution needed is a financial one: to not fulfill any obligations and to refuse to cooperate with the system as it currently exists. Why keep handing our hard-earned rights to the capital mob? We know our resources could be better spent.

Banking / Finance Corruption Economy Infrastructure Law / Legal Lifestyle People Politics Real Estate World विश्वगुरु

Misleading Term “AI” Has Enabled Frauds That Help Fool Investors and Workers

AI buzzwords help fool investors and sometimes workers. Many of us who understand the importance of quality (not quantity through mindless automation) will continue to enjoy sharp imagery, not derivative ripoffs that are likely plagiarism with a presumption of “AInnocence”.

Lifestyle Linux People Software Startup Technology

Pin-compatible and scalable System on Modules (SoMs)

Aquila AM69 is powered by the TI AM69A Arm-based processor, known for its performance and reliability.

Linux Software Startup Technology

Ladakh is pristine and picture-perfect?

If Ladakh is left open to this kind of free-for-all, with no safeguards, mining contractors and greedy gujju colonizers will surely flock in to conquer nature. To be a global leader, Ladakh surely deserves to be developed as the crown of a Himalayan megalopolis, doesn’t it, bhakt? The tremors of your short-gaming government’s smart actions today will be felt by generations decades from now, and justice, surprisingly, is likely to be denied to all.

Climate Corruption Energy Lifestyle People Politics Travel World विश्वगुरु

How is pure digital tech hijacked by greedy agencies and enslaved engineers?

But sites and apps are, at best, as fast as they were ten years ago. In most cases they are even slower. See how clever these greedy AI-hyping cronies are, whose leaders are racing to abuse great civilizations forever!

Corruption Education Infrastructure Lifestyle Linux Media / PR People Politics Software Startup Technology Telecom World विश्वगुरु

Escaping the AI & Hype Garden

AI helps smart fascists and their strategic partners (लाभार्थी, “beneficiaries”) store and stare at live data via analytics dashboards, so they can instantly punish anyone for non-conformity or for opposing their ideas and diktats, securing the Führer’s character at any cost. Today’s MODIfied India is a developing case study, while the West’s social media brands, global tech giants and fully bonded political terrorists are co-sponsors!

Economy Lifestyle Media / PR People Politics Software Startup Technology Telecom World विश्वगुरु

AI Free Digital Prime – Natural & Green

Prime helps you create non-AI apps and bots for secure use. With natural, down-to-earth green technologies, tools and easy-to-use interfaces, you can customize the personality of your apps, bots and sites, and provide instructions for an engaging and personalized experience for your customers and visitors.

Lifestyle Linux Media / PR Software Startup Technology

Branded homes in gated societies

The real estate sector has been seeing a slump in growth for quite some time now. While that is the case for the sector in general, at least one segment seems to be bucking the trend: branded homes in gated societies!

Architecture Builders Infrastructure Investment Law / Legal Lifestyle Listing Real Estate

Remembering MahaShivaratri: beaming as much wisdom as possible to fellow beings ॐ 🙏

The one who was here before the start of time and will be there after the end of everything: something beyond time, infinite. It also represents infinite energy held in cosmic form. “A small, ignorant mind afflicted by greed, theft, power, corruption, hatred and anger is less admirable than a large, pure mind that is itself poor.” – विनाशकारी भक्तामर स्तोत्र (“Destructive Bhaktamar Stotra”)

Art Lifestyle People Politics World विश्वगुरु

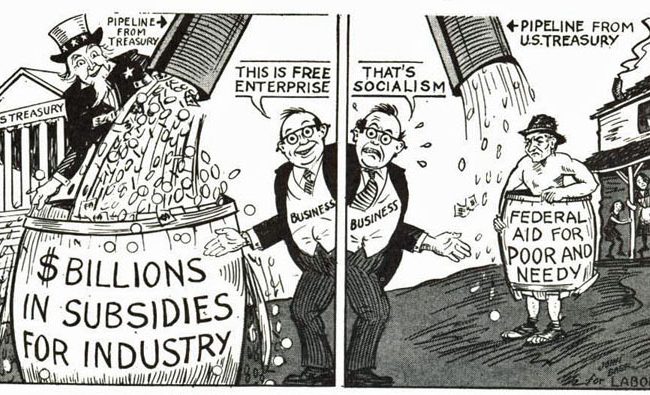

Data Mining of Digitized Nazi Capitalism

Capitalists versus other ideologies is always a geo-neutral and funny contest. They plan to harm each other for the profit of their own gang, and still expect flower garlands as a return gift and birthright, now or when the dust of war settles.

Banking / Finance Corruption Economy People Politics